On May 7, 2026, OpenAI published “Advancing voice intelligence with new models in the API.” Read casually, it looks like a standard audio-model update: more natural conversation, better latency, stronger translation. The official page points to a more important shift. OpenAI is combining three pieces that used to live in separate layers: realtime reasoning, realtime translation, and streaming transcription.

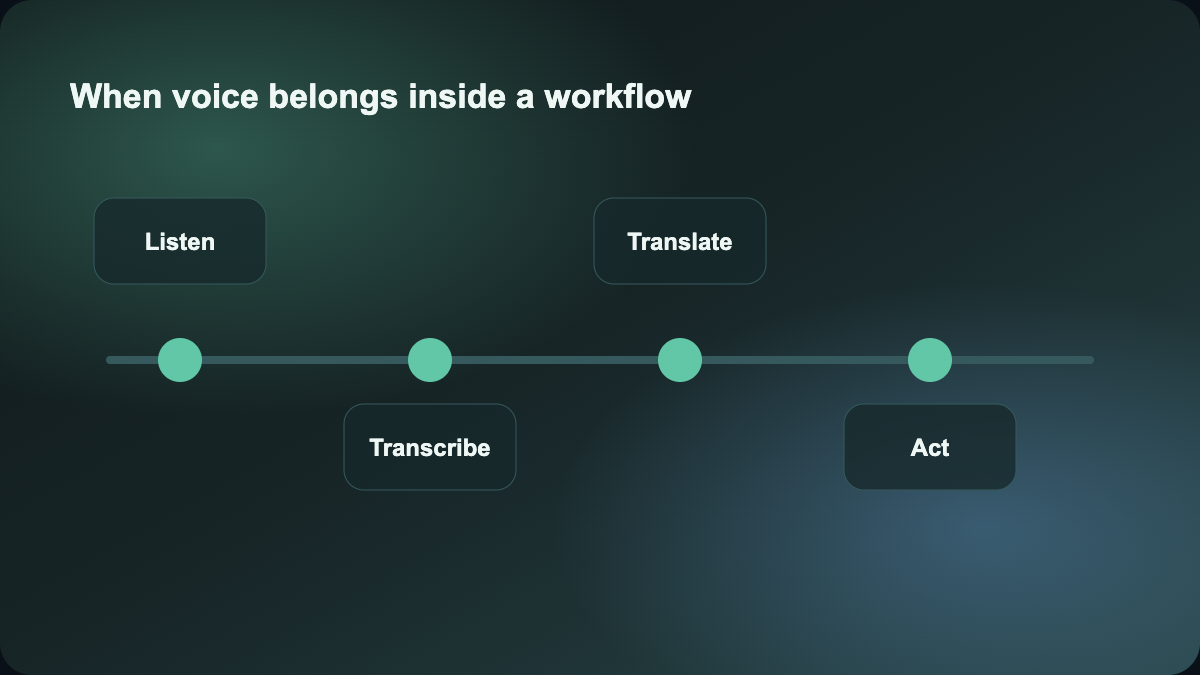

That makes voice look less like a novelty interface and more like a credible workflow entry point. For readers of this site, that matters because the real question is not whether the model sounds smoother. It is whether voice can now sit inside actual task flows.

What changed in this release

The official page introduces three new API models:

- GPT‑Realtime‑2, a live voice model built to reason through harder requests, call tools, recover from interruptions, and keep long conversations coherent

- GPT‑Realtime‑Translate, a live speech translation model that supports more than 70 input languages and 13 output languages

- GPT‑Realtime‑Whisper, a low-latency streaming speech-to-text model

The details that matter are not just the names. The workflow-level changes are the real story:

- developers can enable short spoken preambles so users hear the agent is working instead of assuming it froze

- the model can make parallel tool calls while the conversation continues

- the context window increases from 32K to 128K

- developers can choose reasoning effort from “minimal” to “xhigh”

That is meaningfully different from the older “speech recognition plus synthetic speech” stack. It suggests a product layer where voice is not only input or output. It becomes part of a reasoning and action loop.
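To make the preamble and parallel-tool-call behavior more concrete, here is a minimal Python sketch of one agent turn using plain asyncio. It does not use OpenAI's API: `speak`, `lookup_booking`, `check_weather`, and `handle_turn` are hypothetical stand-ins, and the booking ID and city are made-up sample values. The point is only the shape of the loop: start the tool calls, speak a short preamble while they run, then answer once both results arrive.

```python
import asyncio


# Hypothetical stand-ins: none of these functions belong to the OpenAI API.
async def speak(text: str, log: list[str]) -> None:
    """Pretend to stream a short spoken line to the caller."""
    log.append(f"spoken: {text}")
    await asyncio.sleep(0)  # yield so background tool calls can start


async def lookup_booking(booking_id: str) -> dict:
    await asyncio.sleep(0.05)  # simulate a slow backend call
    return {"booking_id": booking_id, "status": "confirmed"}


async def check_weather(city: str) -> dict:
    await asyncio.sleep(0.05)  # simulate a second slow call
    return {"city": city, "forecast": "rain"}


async def handle_turn(log: list[str]) -> dict:
    # Schedule both tool calls concurrently instead of one after the other.
    tools = asyncio.gather(lookup_booking("ABC123"), check_weather("Lisbon"))
    # While the tools run, give the user a short preamble so the agent
    # does not sound frozen.
    await speak("Let me check your booking and the forecast.", log)
    booking, weather = await tools
    answer = (
        f"Your booking is {booking['status']}; "
        f"expect {weather['forecast']} in {weather['city']}."
    )
    await speak(answer, log)
    return {"booking": booking, "weather": weather}


if __name__ == "__main__":
    log: list[str] = []
    asyncio.run(handle_turn(log))
    print("\n".join(log))
```

In a real deployment, when to emit a preamble and which tools to call would be governed by the model and its session configuration; this sketch only mimics the ordering the announcement describes.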

Why this should not be read as just “better voice”

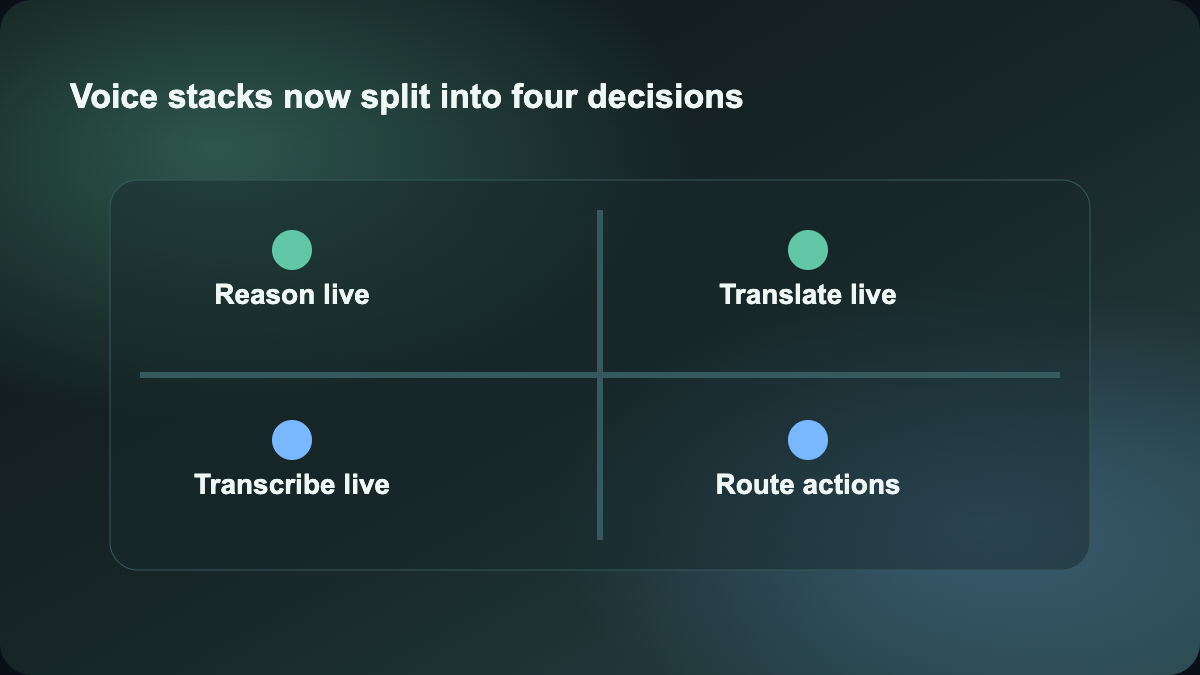

If your first reaction is “so OpenAI has a stronger voice assistant now,” that undersells what this release offers builders. OpenAI explicitly groups emerging voice AI into three patterns:

- voice-to-action

- systems-to-voice

- voice-to-voice

That framing matters because it moves product design away from “can the model hear and answer” toward “can speech trigger and carry real work.” The page itself uses examples across travel, customer support, multilingual service, and product assistance. In other words, OpenAI is treating voice as an operational interface, not just as a conversational feature.

That is also what separates this update from tools like ElevenLabs. ElevenLabs remains stronger as a voice production and output-layer product. OpenAI’s new models are more interesting for teams trying to build live voice agents that reason, translate, transcribe, and call tools in the same loop.

Which teams should care first

The best fit is not entertainment voice production. The strongest early fit is teams that want voice to become the first interaction layer in a real system:

- product teams building voice customer support or voice-guided service flows

- operations teams that want live transcription, summarization, and follow-up actions in the same process

- multilingual support teams that need translation in the moment instead of after the fact

- workflow teams already using tools like Make and considering voice as the front door

The official page also gives enough concrete data to make this more than a vague trend story:

- GPT‑Realtime‑Translate supports 70+ input languages and 13 output languages

- GPT‑Realtime‑2 is priced at $32 / 1M audio input tokens and $64 / 1M audio output tokens

- GPT‑Realtime‑Translate is priced at $0.034 per minute

- GPT‑Realtime‑Whisper is priced at $0.017 per minute

Those numbers do not mean every team should adopt the stack immediately. They do mean the conversation can move from “this sounds impressive” to “we can now estimate use cases, throughput, and cost.”
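As a rough illustration of that kind of estimate, the Python sketch below turns the quoted prices into per-call and monthly figures. The prices come from the announcement; the call volumes are made-up inputs, and `tokens_per_minute` is a hypothetical parameter you would need to measure on your own traffic, since GPT‑Realtime‑2 is priced per audio token rather than per minute.

```python
# Prices as quoted in OpenAI's announcement (USD). Check the official
# pricing page before relying on them.
REALTIME2_INPUT_PER_M_TOKENS = 32.0
REALTIME2_OUTPUT_PER_M_TOKENS = 64.0
TRANSLATE_PER_MINUTE = 0.034
WHISPER_PER_MINUTE = 0.017


def realtime2_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost of GPT-Realtime-2 audio tokens at the per-million rates."""
    return (
        input_tokens * REALTIME2_INPUT_PER_M_TOKENS
        + output_tokens * REALTIME2_OUTPUT_PER_M_TOKENS
    ) / 1_000_000


def realtime2_cost_from_minutes(
    minutes_in: float, minutes_out: float, tokens_per_minute: float
) -> float:
    """Hypothetical helper: converts audio minutes to tokens using an
    assumed tokens-per-minute rate that you must measure yourself."""
    return realtime2_cost(
        round(minutes_in * tokens_per_minute),
        round(minutes_out * tokens_per_minute),
    )


def monthly_minute_costs(
    calls_per_day: int, minutes_per_call: float, days: int = 30
) -> dict[str, float]:
    """Monthly spend for the per-minute models at a given call volume."""
    minutes = calls_per_day * minutes_per_call * days
    return {
        "translate": round(minutes * TRANSLATE_PER_MINUTE, 2),
        "whisper": round(minutes * WHISPER_PER_MINUTE, 2),
    }


if __name__ == "__main__":
    print(realtime2_cost(500_000, 250_000))  # 32.0
    print(monthly_minute_costs(200, 5))      # {'translate': 1020.0, 'whisper': 510.0}
```

Per-minute pricing makes Translate and Whisper easy to budget from call volume alone; Realtime-2 needs a token measurement from real sessions before the same calculation is meaningful.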

How to split roles across tools

If you are designing a voice product or workflow, this release helps clarify the stack:

- ChatGPT and OpenAI’s realtime models are the most relevant for live reasoning, agent behavior, and tool-linked spoken interaction

- ElevenLabs stays stronger as a voice generation and output-layer product

- Make still fits best as the orchestration layer that routes transcripts, intents, and summaries into CRM systems, support queues, notifications, or approvals

That is why this story belongs on this site. It is not another generic model announcement. It changes how teams can think about the voice product stack: who listens and reasons, who speaks well, and who pushes the result into the rest of the system.
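The “who pushes the result into the rest of the system” step is essentially a routing table. Below is a minimal Python sketch of that logic, the kind of mapping you might configure in an orchestration tool such as Make. It does not call Make or OpenAI; `VoiceEvent`, `Router`, `default_routes`, the intent labels, and the destination names are all hypothetical, chosen to mirror the CRM, support queue, notification, and approval targets listed above.

```python
from dataclasses import dataclass, field


@dataclass
class VoiceEvent:
    """Hypothetical output of the 'listen and reason' layer."""
    transcript: str
    intent: str
    summary: str
    needs_approval: bool = False


def default_routes() -> dict[str, str]:
    # Hypothetical intent labels mapped to downstream systems.
    return {
        "update_contact": "crm",
        "report_issue": "support_queue",
        "status_update": "notifications",
    }


@dataclass
class Router:
    """Stand-in for the orchestration layer's routing rules."""
    routes: dict[str, str] = field(default_factory=default_routes)
    fallback: str = "support_queue"  # unknown intents go to a human queue

    def route(self, event: VoiceEvent) -> list[str]:
        destinations = [self.routes.get(event.intent, self.fallback)]
        if event.needs_approval:
            destinations.append("approvals")
        return destinations


if __name__ == "__main__":
    router = Router()
    event = VoiceEvent(
        transcript="Please change my billing address.",
        intent="update_contact",
        summary="Customer requests a billing address change.",
        needs_approval=True,
    )
    print(router.route(event))  # ['crm', 'approvals']
```

Keeping the routing rules as data rather than code means a workflow team can add an intent or change a destination without touching the voice agent itself.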

Why this made today’s cut

The announcement is not from yesterday, but it still falls within the last seven days, the source is official, the facts are concrete, and the practical value is strong. It directly helps readers compare voice tooling, agent workflows, and automation layers. That makes it more useful than chasing a fresher but shallower “hot” item with weak workflow implications.


Source:

- OpenAI: Advancing voice intelligence with new models in the API