Mistral recently published "Remote agents in Vibe. Powered by Mistral Medium 3.5." If you read it only as another model launch, you miss the more important product signal. The story is not just the Medium 3.5 model name or a single benchmark number. It is that Mistral is combining the model, a remote coding-agent layer, and Le Chat work mode into a more complete stack.

That matters because it shifts the product question. This is not only about whether Mistral can offer an AI coding assistant. It is about whether long-running coding tasks can move out of the local IDE and come back later as reviewable results.

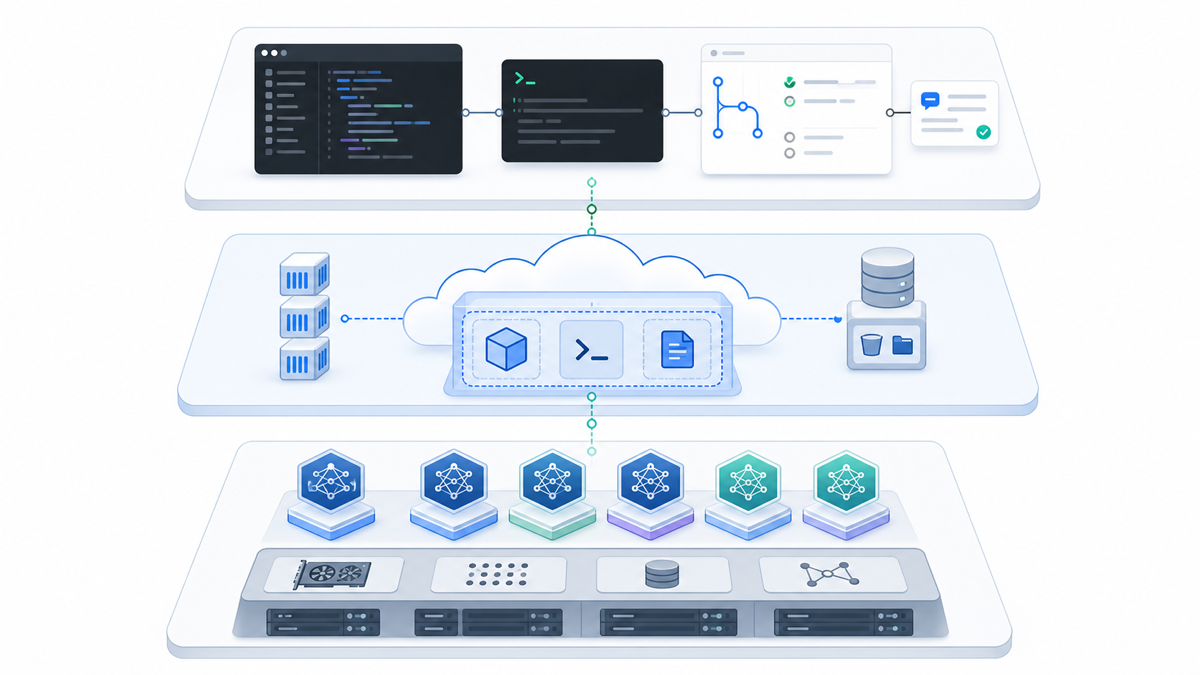

The update is a stack, not a single feature

The official page combines three ideas:

- Mistral Medium 3.5: a dense 128B-parameter model with a 256k-token context window. Mistral reports a score of 77.6% on SWE-bench Verified.

- Vibe remote agents: coding agents that run in remote sandboxes, can be started from the CLI or Le Chat, and can come back with results or even a pull request.

- Le Chat work mode: a continued push from a chat UI toward tool use, research, and task execution.

Each part alone is understandable. Together, they point to a different way of structuring AI coding work.

Many AI coding tools still assume a human sitting inside a local IDE, asking questions, reviewing edits, and driving every loop manually. Remote agents aim at a different problem: long tasks, multi-step investigations, and work that could run in parallel while the developer does something else.

This is not the same layer as Cursor, Copilot, or chat-first coding help

Readers who already use Cursor or GitHub Copilot should not think about this as a simple one-to-one replacement. These products are increasingly competing at different layers.

Local IDE assistants are still best when the loop is short: editing a function, asking about a specific error, writing a small test, or making a focused multi-file change while a human stays closely involved. Remote coding agents are more interesting when the task is:

- long enough to justify stepping away

- parallelizable instead of tightly interactive

- expected to return a concrete result such as a draft PR, a test fix, a refactor pass, or an investigation summary

- recoverable through logs and artifacts instead of only through live conversation

That distinction matters because it changes how AI coding tools should be evaluated. The comparison question is not only "Which one writes better code?" It is also "Which tasks should stay local, and which tasks should be handed to a remote agent?"

Which jobs are good remote-agent candidates

Remote agents are not ideal for every coding task. They are strongest when four conditions hold:

- The task is long enough that hands-off execution is useful.

- The work can run in parallel instead of requiring constant human steering.

- The expected output is concrete: a draft PR, an investigation, a batch test fix, or a migration pass.

- Failures can be inspected afterward through logs, artifacts, or diff output.

That makes them more interesting for repo-wide refactors, CI investigations, test generation, migration work, or structured technical debt passes than for tiny interactive edits.

Short-loop tasks still belong in a local assistant. Quick edits, small debugging loops, and exploratory changes are where an IDE-native tool feels faster and simpler.
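The four conditions can be expressed as a simple checklist. The sketch below is a hypothetical triage helper and is not part of Vibe or any Mistral API. The `CodingTask` fields, the function names, and the 30-minute threshold are all illustrative assumptions, because the article gives no numeric cutoff for "long enough."

```python
from dataclasses import dataclass


@dataclass
class CodingTask:
    """Hypothetical description of a coding task; field names are illustrative."""
    name: str
    expected_minutes: int          # rough estimate of hands-off runtime
    needs_live_steering: bool      # does a human have to guide each step?
    concrete_output: bool          # e.g. draft PR, investigation summary, test fix
    inspectable_afterward: bool    # logs, artifacts, or a diff exist for review


# Assumed placeholder threshold: the article does not specify a number.
MIN_HANDS_OFF_MINUTES = 30


def unmet_remote_conditions(task: CodingTask) -> list[str]:
    """Return which of the four remote-agent conditions this task fails."""
    unmet = []
    if task.expected_minutes < MIN_HANDS_OFF_MINUTES:
        unmet.append("long enough for hands-off execution")
    if task.needs_live_steering:
        unmet.append("can run without constant steering")
    if not task.concrete_output:
        unmet.append("concrete expected output")
    if not task.inspectable_afterward:
        unmet.append("failures inspectable afterward")
    return unmet


def is_remote_candidate(task: CodingTask) -> bool:
    # A task is a good remote-agent candidate only if all four conditions hold.
    return not unmet_remote_conditions(task)


if __name__ == "__main__":
    ci = CodingTask("investigate flaky CI job", 90, False, True, True)
    tweak = CodingTask("rename a local variable", 2, True, True, True)
    print(is_remote_candidate(ci))          # True
    print(unmet_remote_conditions(tweak))   # ['long enough for hands-off execution', 'can run without constant steering']
```

The helper returns the unmet conditions instead of a bare yes or no. This makes it easier to see why a given task should stay in the local loop.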

The biggest lesson is task layering

The most useful takeaway from this release is not the model label. It is the task split.

Many teams currently push every AI coding request through one generic interface. That creates predictable problems:

- small tasks get over-engineered

- long tasks still require someone to sit and wait

- the boundary between local editing help and higher-level agent execution stays fuzzy

- once a task gets large, review and recovery become harder

Mistral's direction suggests a cleaner operating model:

- local assistants handle short editing loops, explanations, and immediate feedback

- remote agents handle longer investigations, broader changes, and work that should come back as a reviewable result

- humans handle the final pull request, review, and risk judgment

That idea matters even if a team never adopts Mistral itself. The same question will apply across the category: which AI tool is best for a local coding loop, and which one is better for long-running remote execution?
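The three-layer operating model can be sketched as a small routing table. This is a hypothetical illustration: the task categories, the `Layer` enum, and the `plan` function are invented for this example. They do not describe how Vibe, Cursor, or Copilot actually route work.

```python
from enum import Enum


class Layer(Enum):
    LOCAL = "local assistant"
    REMOTE = "remote agent"
    HUMAN = "human reviewer"


# Hypothetical task categories loosely drawn from the operating model above.
LOCAL_KINDS = {"edit", "explain", "quick_debug"}
REMOTE_KINDS = {"investigation", "refactor", "migration", "test_generation", "ci_fix"}


def plan(kind: str, opens_pr: bool) -> list[Layer]:
    """Return the ordered layers a task passes through under the layered model."""
    if kind in REMOTE_KINDS:
        steps = [Layer.REMOTE]
    else:
        # Local kinds and unknown kinds both stay in the short local loop,
        # where a human remains closely involved.
        steps = [Layer.LOCAL]
    # Remote work comes back as a reviewable result, and any pull request
    # gets a human decision before it is merged.
    if steps[0] is Layer.REMOTE or opens_pr:
        steps.append(Layer.HUMAN)
    return steps


if __name__ == "__main__":
    print(plan("migration", opens_pr=True))
    print(plan("edit", opens_pr=False))
```

For example, `plan("migration", opens_pr=True)` returns `[Layer.REMOTE, Layer.HUMAN]`, while `plan("edit", opens_pr=False)` returns `[Layer.LOCAL]`. The human layer is appended as a final gate rather than treated as an alternative route. This matches the idea that people keep the final pull request, review, and risk judgment.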

Why this made today's cut

This topic comes from an official source, bears directly on AI coding-tool selection, and connects closely to coverage of Cursor, GitHub Copilot, and Claude. That is why it deserves publication.

One caveat concerns the source. The accessible version of the official page did not clearly show a publication date in the captured content. This article therefore avoids stating a precise date for the Mistral announcement and records that limitation in its verification notes.

Even with that caveat, it is a better fit for this site than a generic leaderboard or media recap, because it points to a real product direction. AI coding competition is shifting from better completion alone toward better delegation of long tasks to remote agents.

Reference:

- Mistral AI: Remote agents in Vibe. Powered by Mistral Medium 3.5.