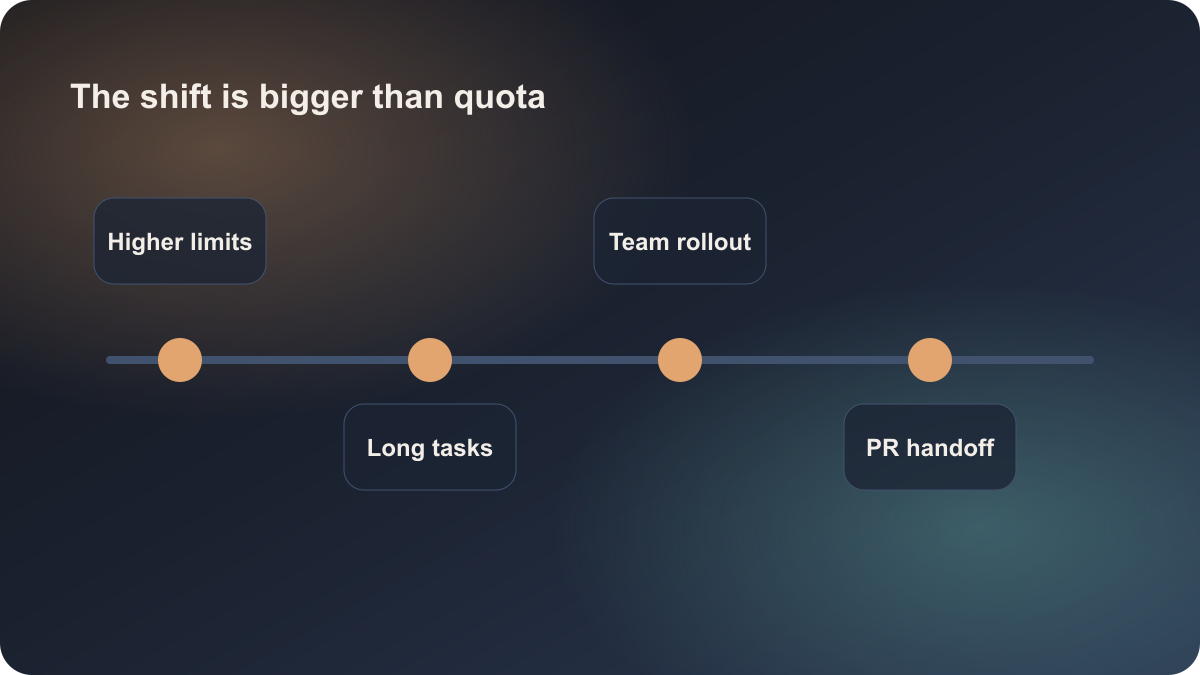

Anthropic published “Higher limits for Claude Code, and how Anthropic teams and SpaceX use it” on May 12, 2026. If you read it only as a quota update, you miss the part that matters to this site. Higher limits are the surface change. The deeper change is that Anthropic is now describing Claude Code as something that can sit in a longer engineering loop, not just as a personal coding sidekick.

That matters for teams comparing Claude, Cursor, and GitHub Copilot. The decision standard is shifting from “which tool feels best at code generation” to “which tool can hold a longer task, return a reviewable result, and fit into a real engineering workflow.”

What actually changed here

Anthropic combined two signals in one official post. The first is product-level: Claude Code now has higher usage limits, which is only interesting if developers are already pushing it beyond quick editor help. The second is adoption-level: Anthropic teams and SpaceX are explicitly presented as examples of how Claude Code is being used in practice.

That combination changes the meaning of the release. A quota change alone would not be worth an article. A quota change paired with concrete team usage is a positioning update. It tells us Anthropic wants Claude Code to hold longer work: multi-step debugging, broader repository changes, and engineering tasks that need more than a few quick prompts.

For readers of this site, that signal is more useful than a single benchmark number. Teams rarely get blocked on whether a model can write code at all. They get blocked on what happens once the task becomes long, messy, and expensive to supervise line by line.

Why this is not a simple Cursor or Copilot replacement

The obvious reaction is: does this mean teams should move from Cursor or Copilot to Claude Code? Not really. These tools are now serving different loop lengths.

GitHub Copilot still wins when the goal is low-friction completion inside an existing editor. Cursor is still strong when a developer wants repository context, cross-file edits, and fast human-in-the-loop iteration. Claude Code, as Anthropic now frames it, is closer to an entry point for longer-running tasks: hand over a meaningful engineering subtask, let the agent push it forward, then review what comes back.

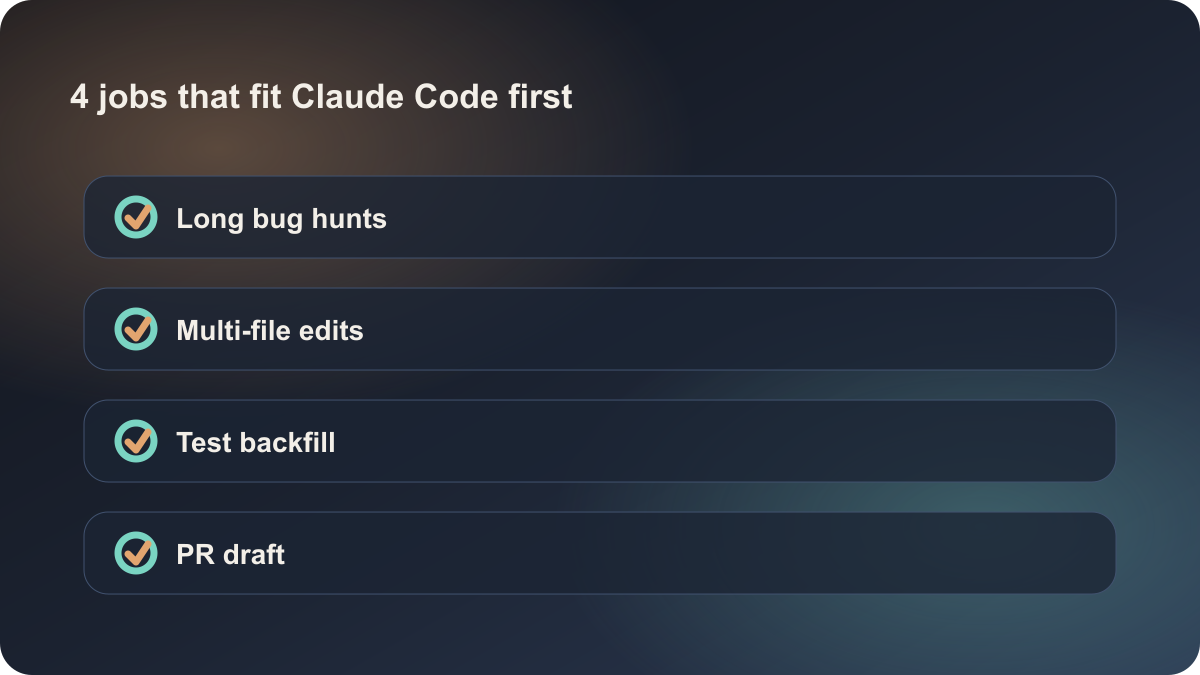

That makes Claude Code more suitable for jobs like:

- cross-file fixes that need multiple attempts

- longer bug hunts that involve logs, tests, and repository scanning

- draft PRs or first-pass changes that an engineer will refine

- command-line workflows where an agent can keep working beyond a short interactive session

Short-loop work still does not need that shape. If you are patching a component, explaining a type error, or adding a small test stub, Cursor or Copilot is usually the cheaper path.

The stronger lesson is task layering

The best takeaway from this news is not “Anthropic raised the cap.” It is that the market is becoming clearer about task layering. Many teams still route every AI coding job through one interface, which creates the worst of both worlds: small jobs get over-managed, while larger jobs run without enough structure to be trusted.

A more useful model is:

- Short edit loops: keep them in tools like Cursor or Copilot.

- Long execution loops: route them to tools like Claude Code.

- Final acceptance: keep it with engineers, tests, and code review.

That is also why this article links to Claude, Cursor, and GitHub Copilot instead of defaulting to ChatGPT. The search intent here is AI coding workflow selection, not general assistant comparison.
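The three-layer model above can be sketched as a small routing function. This is only an illustration: the `Task` fields, the `max_short_files` threshold, and the function names are assumptions made for the example, not anything Anthropic, Cursor, or GitHub defines.

```python
from dataclasses import dataclass
from enum import Enum


class Loop(Enum):
    SHORT_EDIT = "short edit loop (e.g. Cursor or Copilot)"
    LONG_EXECUTION = "long execution loop (e.g. Claude Code)"


@dataclass
class Task:
    description: str
    files_touched: int     # rough estimate of how many files the change spans
    needs_iteration: bool  # expects several attempts: run tests, read logs, retry


def route(task: Task, max_short_files: int = 2) -> Loop:
    """Pick a loop for the task. The file threshold is an illustrative assumption."""
    if task.needs_iteration or task.files_touched > max_short_files:
        return Loop.LONG_EXECUTION
    return Loop.SHORT_EDIT


def accepted(tests_pass: bool, review_approved: bool) -> bool:
    """Final acceptance stays with tests and human review, whichever loop ran."""
    return tests_pass and review_approved
```

The point of the sketch is that routing and acceptance are separate decisions: a task can go to either loop, but nothing is accepted without passing tests and a review.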

What teams can do with this now

If you are a solo developer, the practical move is not “switch tools today.” It is to redefine which tasks are worth handing to an agent for a longer run. Instead of saying “help me with this broken test,” define the shape: cluster the failures, propose two fixes, then produce the smallest valid diff. Once the task has structure, Claude Code has a much better chance of being useful as a workflow tool instead of a chat novelty.
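One way to give a task that kind of shape is to write it down as a small structured brief before handing it to an agent. The sketch below is a hypothetical helper, not a Claude Code feature: `TaskBrief`, its fields, and the example constraint are all assumptions made for illustration. It only renders plain text that could be pasted into any tool.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    goal: str
    steps: list[str]
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the brief as numbered steps followed by optional constraints."""
        lines = [f"Goal: {self.goal}", "Steps:"]
        lines += [f"{i}. {step}" for i, step in enumerate(self.steps, start=1)]
        if self.constraints:
            lines.append("Constraints:")
            lines += [f"- {c}" for c in self.constraints]
        return "\n".join(lines)


brief = TaskBrief(
    goal="Fix the failing tests",
    steps=[
        "Cluster the failures by likely root cause",
        "Propose two candidate fixes",
        "Produce the smallest valid diff",
    ],
    # Illustrative constraint; replace with whatever your repository requires.
    constraints=["Do not change public interfaces"],
)
print(brief.render())
```

Keeping the steps explicit also makes the result easier to review, because each step maps to something you can check in what comes back.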

If you are leading a small team, start with low-risk but long enough work:

- backfilling missing tests

- grouping recurring CI failures

- broad type-error cleanup

- repository-level docs and scaffolding sync

- first-pass refactors with clear validation commands

These are strong pilot cases because they have visible outputs, local verification, and controlled failure cost. That is the right environment to decide whether a longer-running agent entry point is actually improving engineering throughput.
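For pilots like these, “local verification” can be as simple as a script that runs the agreed validation commands and reports which ones passed before anyone opens the diff. The following is a minimal sketch under that assumption: `run_checks` and `ready_for_review` are hypothetical names, and the two commands are placeholders standing in for a real test or lint command.

```python
import subprocess
import sys


def run_checks(commands: list[list[str]], timeout: int = 600) -> dict[str, bool]:
    """Run each validation command and record whether it exited with status 0."""
    results: dict[str, bool] = {}
    for cmd in commands:
        key = " ".join(cmd)
        try:
            proc = subprocess.run(cmd, capture_output=True, timeout=timeout)
            results[key] = proc.returncode == 0
        except (subprocess.TimeoutExpired, FileNotFoundError):
            # A hung or missing command counts as a failed check.
            results[key] = False
    return results


def ready_for_review(results: dict[str, bool]) -> bool:
    """Only hand the change to a reviewer if at least one check ran and all passed."""
    return bool(results) and all(results.values())


# Placeholder commands: swap in your project's real test and lint invocations.
checks = [
    [sys.executable, "-c", "print('ok')"],
    [sys.executable, "-c", "raise SystemExit(1)"],
]
results = run_checks(checks)
for command, passed in results.items():
    print("PASS" if passed else "FAIL", command)
```

A passing run does not replace code review. It only filters out agent output that is not yet worth an engineer's time.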

Why this is timely enough to publish today

The announcement is worth covering now because it comes from an official Anthropic source, it is recent, and it changes how readers should think about AI coding tools. It is not just a brand post. It is a signal that Claude Code is moving from “personal coding helper” toward “team entry point for longer engineering work.”

That said, it should not be read as a universal winner story. Mature teams will not force every job into a single tool. They will let Cursor handle quick repository edits, Copilot handle low-friction completion, and Claude Code handle longer engineering loops where a result can be returned and reviewed. The question is less “which tool is best” and more “which loop should each tool own.”

Sources:

- Anthropic: “Higher limits for Claude Code, and how Anthropic teams and SpaceX use it” (May 12, 2026)